Post 3 of the Nexus series: bringing design decisions into the project’s indexed knowledge.

The catalog has decisions in it

Wholly new forms of encyclopedias will appear, ready made with a mesh of associative trails running through them… (Vannevar Bush, “As We May Think”, 1945)

The last post, Typed links and the catalog, introduced the catalog as a metadata layer that tracks indexed documents and the typed edges between them. This post looks at one particular kind of document: the Research-Design-Review (RDR), the structured note Nexus uses to capture non-trivial design decisions, investigations, or larger-scale architecture. RDRs are Nexus’s take on the structured-decision-record pattern that Michael Nygard put on the map in 2011 (Documenting Architecture Decisions), now a broad community practice around ADRs. Nexus adds research findings with explicit evidence classification, an actual lifecycle (gate, accept, close, sometimes abandon) rather than a static document, and integration with the indexed corpus. The value of indexing that reasoning alongside the code that implements it is what I’ll ramble on about for the rest of the post.

One caveat, though: RDRs are “implemented” on top of Nexus. They’re a foundational use case, but they aren’t required; Nexus operates quite fine without them. However, the integration value of having RDRs indexed and woven into the same knowledge web is… immense.

Traction control between people and LLMs

I reached a point in agentic coding where I could create vast amounts of code, weave together complex things, and execute on large-scale plans and architectures. Predictably, as the capabilities and functionality grew, so did the complexity of managing these massive dynamic systems. And the complexity problem isn’t just mine – it’s not just a human problem. It affects the LLMs as well as the organization – the teams of people and their LLMs together. Managing information complexity and dynamic systems evolution is everyone’s problem.

And so I came to model this as a problem of coupling between systems: between individuals driving these powerful systems, and across the interactions of teams. The latter is particularly interesting. How does one effectively cooperate with other powerfully augmented individuals to – at a bare minimum – get things done? We can create so much so fast that it becomes extremely difficult to maintain a coherent view of what’s going on, much less feel in control of the process.

I picture it as driving on slick ice. Everything is great until you want to change your vector, and then you start slip-sliding away. Agentic coding feels much the same, and cooperating in teams where each individual is a powerfully augmented GAI Centaur can be a similarly precarious interaction.

So I think of Nexus as a kind of traction control between the people working on a project and the LLMs working alongside them. It does two things that matter.

First, it keeps human intent and LLM work coupled through structure, so the work doesn’t slip when either side would otherwise spin out. A typical failure mode: the human’s context overflows, the LLM guesses where it should ask, or the work drifts from the decisions that shaped it. Suddenly neither the LLM nor the developers know what’s real any more, where they’re headed, or how the heck they got into this mess.

Second, the same structured coupling creates provenance: a curated record of how the project’s state evolved, not just what it currently is. It lets us reconstruct what happened (within reason, of course) and so both understand the current state (think of a “rollup” of all the design decisions, backtracking, fixes, branches, etc.) and how it got there. What were the critical decisions made last week, or last year, that directly relate to pressing issues such as a site-wide meltdown happening right now?

You can think of Nexus as a version-controlled command-and-control integration platform and oracle.

- Version-controlled because the substrate is in git. Every RDR, every bead, every typed link is a file in the repo. Diffable, auditable, cloneable. A new team member comes up to speed by indexing the latest checkout. Simply let Nexus index the repo and the project’s institutional memory is local. Nexus can also install git hooks so the local index is always current with whatever’s checked out.

- Command and control because an RDR is where people commit intent and the lifecycle is how Nexus tracks its execution, weaving the decision and the work into the knowledge base. Beads records what gets done: it’s an excellent project-management system that Nexus uses to represent the execution plan. Re-indexing repositories and collections updates the link graph to match what actually happened. When the design doesn’t survive contact with reality, abandonment is a status change the graph respects, not a quiet rewrite of history. An LLM working alongside the project over time integrates new knowledge the same way a new team member does: by reading the indexed record of structured interactions.

- Integration because code and reasoning sit in the same corpus. Nexus makes the structure discoverable and walkable through typed links. The decision that shaped operators/dispatch.py is two edges away from the code. Nothing has to be rediscovered. With semantic and full-text search layered together, everything is a query away.

- Oracle because nx_answer is available to any agent, skill, or person. Ask “What did we decide about plan matching and why?” and the response is an account drawn from the live-decision slice of the corpus, with chash:<sha256> citations that drop you into the exact paragraph of the exact RDR. Structure survives rearrangement as things evolve. Decisions remain auditable. Design stays linked to the code that carries it through development and the rest of the lifecycle.
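To see why a content-hash citation can survive rearrangement, here is a minimal sketch. It assumes the citation key is the SHA-256 of the whitespace-normalized paragraph text; the post doesn’t say how Nexus actually computes chash, so the functions and the sample text below are hypothetical illustrations only.

```python
import hashlib


def chash(paragraph: str) -> str:
    """Hypothetical citation key: SHA-256 of the whitespace-normalized paragraph."""
    normalized = " ".join(paragraph.split())
    return "chash:" + hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def index_paragraphs(document: str) -> dict[str, str]:
    """Map each paragraph's citation key to its text (paragraphs split on blank lines)."""
    paragraphs = [p for p in document.split("\n\n") if p.strip()]
    return {chash(p): p for p in paragraphs}


# Invented sample text, before and after the paragraphs are reordered.
original = "Problem: plans are rebuilt per query.\n\nDecision: match against saved plans."
rearranged = "Decision: match against saved plans.\n\nProblem: plans are rebuilt per query."

citation = chash("Decision: match against saved plans.")

# The same key resolves in both versions, because it depends on content, not position.
resolved_before = index_paragraphs(original)[citation]
resolved_after = index_paragraphs(rearranged)[citation]
```

The trade-off in a scheme like this is that editing the paragraph’s wording changes its key, which is arguably the right behavior for a citation: it points at what was actually said.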

Why structure the conversation

The systems we work with are bigger than working memory, whether yours, an LLM’s, or a PR reviewer’s three weeks from now. Complexity and working-memory limits are real constraints. Without trackable specifications, designs, and plans, purpose drift sets in quickly: new problems surface mid-implementation, side-quests accrete, and you end up coding your way out of corners instead of designing your way around them. Part of the problem is control; another part is just getting your arms around the scope of what you’re solving and how it’s evolving.

You start slip-sliding at exactly the moment you’re engaging and need the most control.

An RDR is a structured way to engage with an LLM on a design problem, an evolving architecture, a nasty research issue. It’s an abstract kind of traction control: a shared shape we and the LLMs can grab, manipulate, and orient ourselves around, so the conversation doesn’t evaporate into chat history or fade into a pile of beads that never leave your local disk.

The sections of an RDR are general enough and yet still useful: problem, research findings, options considered, decision, optional post-mortem. Once the conversation has a shape, the catalog can reason about it and people can put it in context. Status becomes filter metadata. Research findings carry their own evidence quality. Cited code becomes implements edges. Citations to other RDRs become typed references. The conversation gets woven into the rest of the knowledge base through curated links.

The rule is mostly about timing. Iterate on the RDR as much as you need during drafting; that’s what drafting is for. At acceptance it becomes the reference for what was decided. If implementation proves the design wrong, don’t rewrite the accepted RDR to match what shipped; abandon it and draft a new one with what you learned. The chain of RDRs represents the entire messy progression of complicated work, on complicated systems, doing complicated things. Iteration on a decision lives in the chain, not in any single accepted document.
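The timing rule can be sketched as a small state machine. The status names follow the lifecycle described in this post, but the transition table and the class itself are assumptions for illustration, not Nexus’s implementation.

```python
from enum import Enum


class Status(Enum):
    DRAFT = "draft"
    ACCEPTED = "accepted"
    CLOSED = "closed"
    ABANDONED = "abandoned"


# Assumed transition table; the post names the states but not the exact rules.
ALLOWED = {
    Status.DRAFT: {Status.ACCEPTED, Status.ABANDONED},
    Status.ACCEPTED: {Status.CLOSED, Status.ABANDONED},
    Status.CLOSED: set(),
    Status.ABANDONED: set(),
}


class Rdr:
    def __init__(self, rdr_id: str, body: str) -> None:
        self.rdr_id = rdr_id
        self.body = body
        self.status = Status.DRAFT

    def revise(self, new_body: str) -> None:
        """Body edits are only allowed while drafting."""
        if self.status is not Status.DRAFT:
            raise ValueError(f"{self.rdr_id} is {self.status.value}; draft a new RDR instead")
        self.body = new_body

    def move_to(self, new_status: Status) -> None:
        """Change status, rejecting transitions the table does not allow."""
        if new_status not in ALLOWED[self.status]:
            raise ValueError(f"{self.status.value} -> {new_status.value} is not allowed")
        self.status = new_status


rdr = Rdr("RDR-A", "first draft")  # hypothetical id
rdr.revise("second draft")         # fine: still drafting
rdr.move_to(Status.ACCEPTED)
try:
    rdr.revise("rewritten to match what shipped")
except ValueError as err:
    print(err)                     # the accepted text stays as the reference
rdr.move_to(Status.ABANDONED)      # the honest exit when the design was wrong
```

The point of the sketch is that iteration after acceptance has exactly one outlet: a status change plus a new RDR, never an in-place edit.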

The document and indexing lifecycles handshake

An interesting part of the Nexus lifecycle isn’t the states, per se. It’s the touchpoints between the document’s lifecycle and the corpus’s indexing lifecycle. It’s the coupling between the document state, the project state, the repository state and the organizational state (linking, topics). It’s a dynamic, evolving melange of complex information.

An RDR lands in docs/rdr/ and nx index repo registers it in the catalog, chunks it, and embeds it. As the draft evolves, deltas are re-indexed. Every src/... mention in the body becomes an implements edge into code. Every cited RDR or paper lands as a typed reference. Agents working on adjacent problems traverse the catalog and the draft surfaces on its own. When implementation lands and the code shifts, re-indexing updates the edges to match reality. When the design is abandoned or superseded, one frontmatter line changes and the graph adjusts: the abandoned RDR stays on record, but forward-walks from the code skip past it to the live artifact. Backward-walks still reach the abandoned reasoning for readers who want it.
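A rough sketch of that handshake, under stated assumptions: code references are detected with a simple regex over src/ paths ending in .py, and a forward walk is modeled as a filter on status. The class, function names, and sample RDRs are hypothetical; the real catalog is certainly richer than this.

```python
import re
from dataclasses import dataclass, field

# Assumption: code references look like "src/<path>.py"; real extraction may differ.
SRC_MENTION = re.compile(r"\bsrc/[\w./-]+\.py\b")


@dataclass
class RdrDoc:
    rdr_id: str
    status: str  # e.g. "accepted", "closed", "abandoned"
    body: str
    implements: set[str] = field(default_factory=set)


def index_rdr(doc: RdrDoc) -> None:
    """(Re)derive implements edges from the src/ paths mentioned in the body."""
    doc.implements = set(SRC_MENTION.findall(doc.body))


def walk_from_code(path: str, docs: list[RdrDoc], include_abandoned: bool = False) -> list[str]:
    """Return ids of RDRs linked to a file; by default skip abandoned ones."""
    return [
        d.rdr_id
        for d in docs
        if path in d.implements and (include_abandoned or d.status != "abandoned")
    ]


# Invented sample documents.
docs = [
    RdrDoc("RDR-A", "accepted", "Proposes changes to src/app/dispatch.py."),
    RdrDoc("RDR-B", "closed", "Ships src/app/dispatch.py and src/app/core.py."),
]
for d in docs:
    index_rdr(d)

docs[0].status = "abandoned"  # the one-line frontmatter change

live = walk_from_code("src/app/dispatch.py", docs)
everything = walk_from_code("src/app/dispatch.py", docs, include_abandoned=True)
```

Here `live` contains only RDR-B, while `everything` still reaches RDR-A, mirroring the forward-walk and backward-walk behavior described above.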

There’s additional complexity because Beads (the issue tracker Nexus pairs with) carries the execution layer alongside the RDRs. So in Nexus, both RDRs and beads are catalog entries with tumblers; queries walk from a closed RDR through its implementation history without leaving the substrate. We get a full 360° view of the project, what’s happening and how it integrates across code and even across repositories.

We can scale the RDR to the stakes. We don’t have to accept the limitations at either end of the spectrum of managing change. A one-paragraph bug-fix RDR is still a valid RDR. The shape is the same whether the decision is a line-level fix or a subsystem rewrite; only the ceremony changes. We can integrate much of what we do, see, and investigate, without having to worry about it.

Cumulative design, focused retrieval

A long-lived project isn’t a single coherent design. It’s a trail; a network of paths. It’s decisions made, decisions revised, proposals abandoned, directions superseded. Rewriting the older RDRs to match the newer decisions would erase the reasoning chain that makes the corpus valuable, and would be dishonest. An audit trail contradicts itself by construction: later entries overturn earlier ones.

That puts pressure on retrieval. Plain grep for “plan matching” across Nexus’s own 90+ RDRs returns an abandoned proposal next to the decision that shipped, with no way to tell them apart. Nexus handles it by layering focusing mechanisms across its storage tiers. T2 full-text search is exhaustive and exact, right for audit scans. T3 semantic search is relevance-ranked and embedded, right when the vocabulary has drifted. Status filters scope to live decisions, or to dead branches when that’s the question. Typed links (superseded_by, implements) let a reader walk from a stale artifact to the current one. Evidence tags discount time-rot on old assumed findings. Topic taxonomy surfaces the current neighborhood of a concept even when terminology has moved on.

None of these is the answer on its own. It’s a defense-in-depth strategy. A simple embed-and-return-top-k retrieval would cheerfully hand you an abandoned design as authoritative. Nexus stacks the modes: exhaustive when the task demands it, focused when the question is well-scoped, graph-walkable when the why matters more than the what.
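As a sketch of how two of those layers might stack, the snippet below filters semantic hits by status and then discounts old findings tagged as assumed. The field names, the "verified" tag, the choice of which statuses count as live, and the half-life decay are all assumptions made for illustration; the post doesn’t describe Nexus’s actual scoring.

```python
from dataclasses import dataclass


@dataclass
class Hit:
    rdr_id: str
    status: str        # "accepted", "closed", "abandoned", ...
    evidence: str      # hypothetical tags: "verified" or "assumed"
    age_days: int
    similarity: float  # raw score from semantic search, 0..1


# Assumption: accepted and closed RDRs count as live decisions.
LIVE = frozenset({"accepted", "closed"})


def focus(hits: list[Hit], statuses=LIVE, half_life_days: float = 180.0) -> list[Hit]:
    """Filter by status, then rank with a decay applied to old 'assumed' findings."""

    def score(h: Hit) -> float:
        if h.evidence == "assumed":
            # Halve the score for every half-life the assumption has aged.
            return h.similarity * 0.5 ** (h.age_days / half_life_days)
        return h.similarity

    return sorted((h for h in hits if h.status in statuses), key=score, reverse=True)


# Invented sample hits: naive top-k would return RDR-X first.
hits = [
    Hit("RDR-X", "abandoned", "verified", 30, 0.95),
    Hit("RDR-Y", "closed", "verified", 30, 0.80),
    Hit("RDR-Z", "accepted", "assumed", 360, 0.90),
]

live_ids = [h.rdr_id for h in focus(hits)]
dead_ids = [h.rdr_id for h in focus(hits, statuses={"abandoned"})]
```

With these sample numbers the abandoned RDR-X drops out of the live view despite having the highest raw similarity, and the year-old assumption in RDR-Z ranks below the verified RDR-Y. Asking for dead branches explicitly brings RDR-X back.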

A walk through the retrieval-layer arc

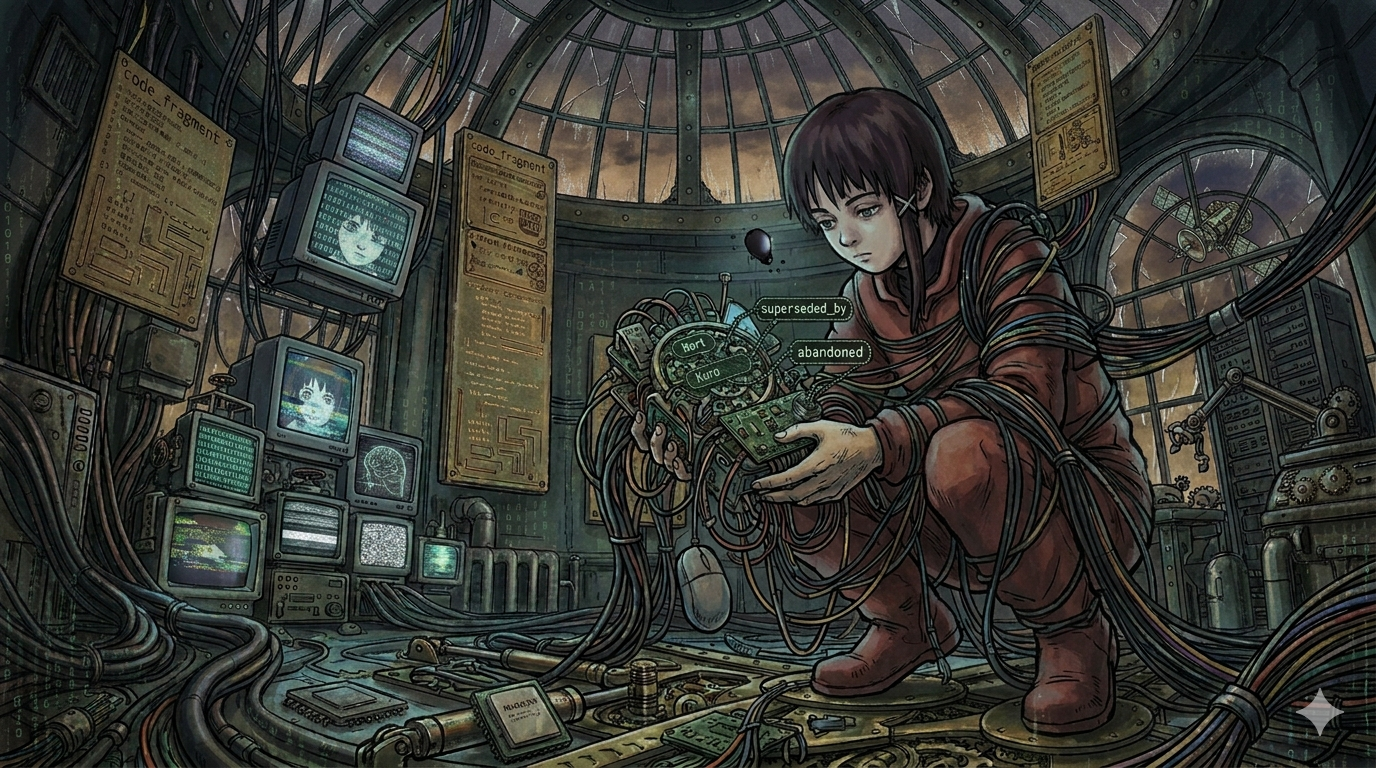

The most recent design effort in Nexus was a three-RDR arc on plan-centric retrieval. RDR-078 framed it and took the standard path: drafted, accepted, implemented, closed. RDR-079 was the proposed implementation, accepted and then abandoned a day later when contact with the existing operator-dispatch code surfaced an architectural mismatch. RDR-080 was the consolidated successor that shipped. RDR-079’s frontmatter now carries:

    status: abandoned
    superseded_by: "nexus.operators.dispatch (PR #168)"

The abandoned middle RDR is the most interesting artifact. A future reader walking backward from operators/dispatch.py lands on RDR-079, sees the abandoned status, follows superseded_by to the actual code+PR, and reconstructs the real story without anyone having to write a narrative. One file (src/nexus/mcp/core.py) appears in both RDR-078’s and RDR-080’s implements lists, which is a real signal for a reviewer: is the newer code respecting the contract the framing RDR established, or replacing it?
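The shared-file signal is easy to surface mechanically. This sketch assumes the implements lists are available as plain sets per RDR; only src/nexus/mcp/core.py comes from the arc above, and the other paths are placeholders, not real files in either RDR’s list.

```python
from collections import defaultdict


def shared_files(implements_by_rdr: dict[str, set[str]]) -> dict[str, list[str]]:
    """Return files claimed by more than one RDR, with the RDRs that claim them."""
    owners = defaultdict(list)
    for rdr_id, files in implements_by_rdr.items():
        for path in files:
            owners[path].append(rdr_id)
    return {path: sorted(ids) for path, ids in owners.items() if len(ids) > 1}


implements_by_rdr = {
    "RDR-078": {"src/nexus/mcp/core.py", "src/example/placeholder_a.py"},  # second path is a placeholder
    "RDR-080": {"src/nexus/mcp/core.py", "src/example/placeholder_b.py"},  # second path is a placeholder
}

overlap = shared_files(implements_by_rdr)
```

Each entry in `overlap` is a prompt for the reviewer’s question rather than an answer to it: the tooling can find the overlap, but deciding whether the contract was respected or replaced is still a human call.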

The lifecycle recorded what happened. The catalog made it walkable. Nexus makes it comprehensible and usable.

Going deeper

- RDR Overview: what RDRs are, when to write one, evidence-quality classification.

- RDR Workflow: Create, Research, Gate, Accept, Close.

- RDR Nexus Integration: how the catalog, link graph, and topic taxonomy amplify RDRs.

- Project RDR Index: every RDR in the Nexus project with status and type.

- Beads: the paired issue tracker.

What’s next

Up next: Plans, not replanning. The argument for runtime query plan templates with empirical promotion, against cold-start LLM replanning on every query. Where the nx_answer function stops being magic and starts becoming a saved, named, measurable plan you can inspect, version, and improve.

Follow along with the same setup as Nexus by Example: run uv tool install conexus, then nx doctor, then nx index repo . inside any git checkout.
